As the August 2026 enforcement deadline for high-risk AI systems looms, a critical blind spot has emerged in the corporate suite: the recruitment supply chain. Most organizations assume that by outsourcing talent acquisition to external agencies, they are also outsourcing the associated regulatory risks. This is a dangerous misconception.

In a landscape where algorithms now gatekeep professional opportunity, companies must understand that the legal, financial, and reputational exposure ultimately belongs to them - not the agency.

1. What the Regulation Actually Says

⚖ Annex III - High-Risk Classification

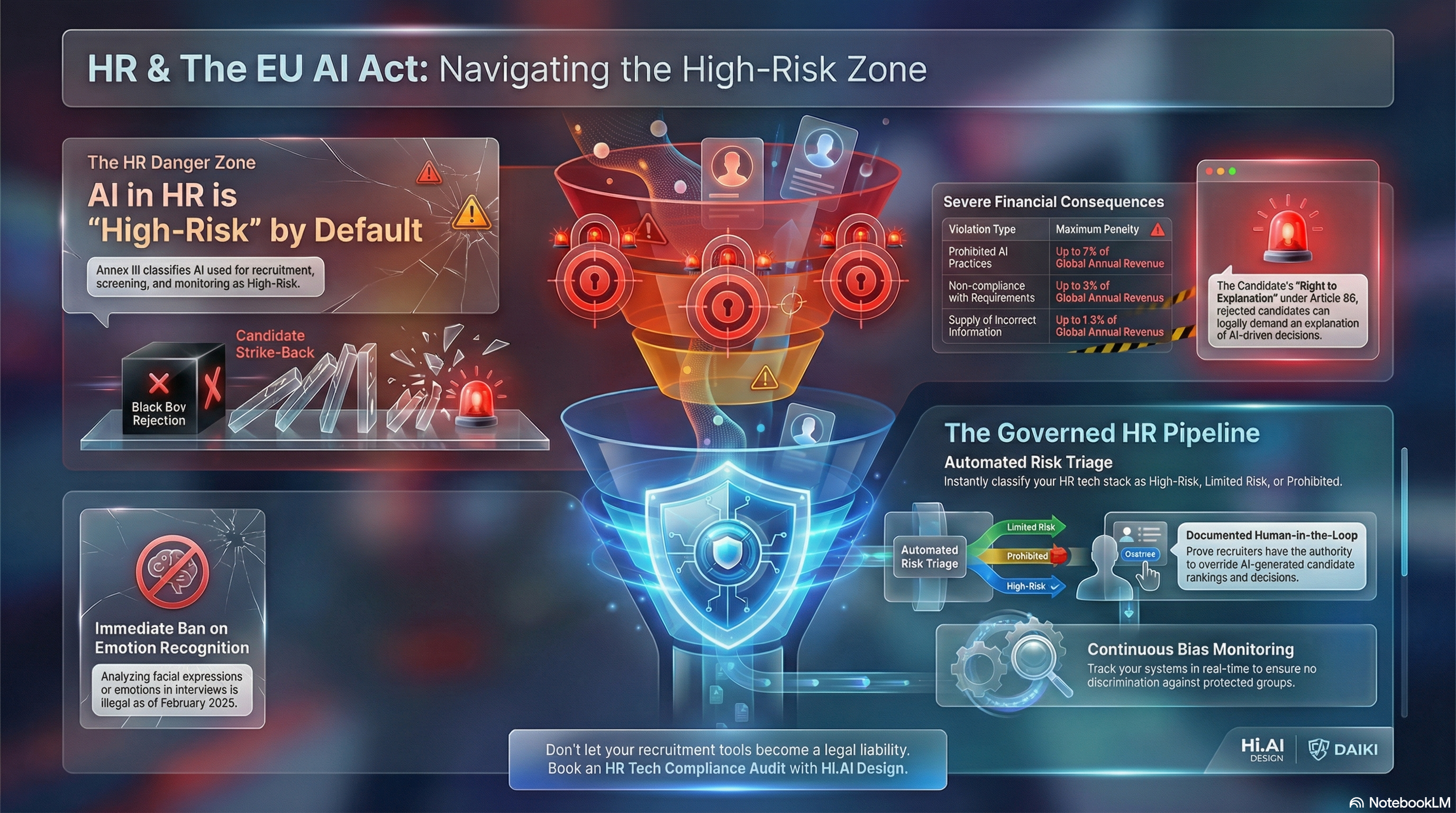

The EU AI Act is unequivocal regarding employment. Under Annex III, AI systems intended to be used for the recruitment or selection of persons - specifically for advertising vacancies, screening applications, and evaluating candidates - are classified as High-Risk.

While the recruitment agency often acts as the Deployer (the entity using the AI system under its authority, as defined in Article 3(4) and subject to the obligations in Article 26), the company benefiting from that shortlist remains the primary target for discrimination claims and reputational damage.

📋 Article 86 - Right to Explanation

Article 86 grants candidates a statutory Right to Explanation. If a high-risk AI system played a role in their rejection, they have a legal right to obtain from the deployer "clear and meaningful" explanations of the role the AI system played in the decision-making procedure and of the main elements of the decision taken.

2. What Companies Are Getting Wrong

The market is currently operating on "passive trust," which is a significant liability. Companies are failing to recognize three critical realities:

❌ Liability is Non-Transferable

You cannot contract out of your obligations under EU anti-discrimination laws. If an agency's tool creates a biased shortlist, your company - the one making the final hiring decision - faces the blowback.

❌ The "Black Box" Defense is Dead

"We didn't know our agency used that tool" is no longer a valid legal defense. Under the principle of due diligence, organizations are expected to know the provenance of the candidates they interview.

❌ Prohibited Tech is Already in Use

Many agencies still use "personality scoring" or "emotion inference" from video interviews. Where such systems fall under the Article 5 prohibitions, which have applied since February 2025, they carry the highest tier of fines: up to EUR 35 million or 7% of total worldwide annual turnover, whichever is higher.

3. The Correct Approach: The SCOPE Audit

To protect your brand and budget, you must transition from passive trust to active governance. HI AI Design recommends an immediate audit of all third-party recruitment partners using the SCOPE lens:

S - System Inventory

Mandate that every agency disclose the specific AI tools used in your hiring funnel. No exceptions, no ambiguity.
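A disclosure is easier to act on when it is captured in a structured inventory rather than in email threads. The sketch below is illustrative only: the class names, field names, agency, and tool names are all hypothetical, and the use categories simply mirror the Annex III recruitment uses named earlier in this article. The flag it produces is a first-pass triage aid, not a legal classification.

```python
from dataclasses import dataclass, field

# Recruitment uses this article names as high-risk under Annex III.
HIGH_RISK_USES = {"advertising", "screening", "evaluating"}


@dataclass
class AITool:
    name: str    # hypothetical tool name as disclosed by the agency
    vendor: str  # hypothetical vendor name
    uses: set[str] = field(default_factory=set)

    def is_high_risk(self) -> bool:
        # Flag the tool if any declared use overlaps the listed uses.
        return bool(self.uses & HIGH_RISK_USES)


@dataclass
class AgencyInventory:
    agency: str
    tools: list[AITool] = field(default_factory=list)

    def high_risk_tools(self) -> list[str]:
        # Names of tools that need compliance follow-up, in disclosed order.
        return [tool.name for tool in self.tools if tool.is_high_risk()]


inventory = AgencyInventory(
    agency="Example Talent Partners",
    tools=[
        AITool("CVRanker", "Vendor A", {"screening"}),
        AITool("CalendarBot", "Vendor B", {"scheduling"}),
    ],
)
print(inventory.high_risk_tools())  # ['CVRanker']
```

An agency that cannot fill in the `uses` field for a tool has, in effect, not completed its disclosure.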

C - Compliance Verification

Require proof of Article 13 compliance (Instructions for Use) and Article 14 (Documented Human Oversight). If the agency cannot show you how a human can override the AI's ranking, the system is non-compliant.

O - Bias Monitoring (Oversight)

Demand regular, documented bias audits of the agency's tools to ensure they are not inadvertently filtering out protected groups - which would trigger local labor law violations alongside AI Act breaches.
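As a rough illustration of what a documented bias audit might compute, the sketch below compares shortlisting rates across groups. It rests on assumptions: the 0.8 threshold is the "four-fifths" heuristic borrowed from US employment practice, not a figure set by the AI Act or by EU anti-discrimination law, and the group labels and counts are invented sample data. A real audit would also need statistical testing and legal review.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to shortlisted / applied, skipping groups with no applicants."""
    return {
        group: shortlisted / applied
        for group, (shortlisted, applied) in outcomes.items()
        if applied > 0
    }


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        # Nobody was shortlisted, so no group is favoured over another.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(
    outcomes: dict[str, tuple[int, int]], threshold: float = 0.8
) -> list[str]:
    """Groups whose ratio falls below the (assumed) threshold."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Invented sample data: (shortlisted, applied) per group.
sample = {"group_a": (40, 100), "group_b": (24, 100)}
print(flagged_groups(sample))  # ['group_b']
```

Here `group_b` is shortlisted at 24% against 40% for `group_a`, a ratio of about 0.6, so it is flagged for review.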

P - Contractual Shielding (Protection)

Update your MSAs (Master Service Agreements) to include "AI Transparency Clauses," requiring agencies to provide the necessary data to satisfy an Article 86 "Right to Explanation" request within 48 hours.
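A 48-hour clause is only useful if someone tracks it. The following minimal sketch computes when an agency's response falls due, assuming the window runs in calendar hours from the moment the request is received. Both that interpretation and the function names are assumptions for illustration; the 48 hours is the contractual term proposed above, not a deadline set by Article 86.

```python
from datetime import datetime, timedelta, timezone

# Contractual response window proposed in this article (not a statutory deadline).
RESPONSE_WINDOW = timedelta(hours=48)


def response_due(received_at: datetime) -> datetime:
    """Time by which the agency must supply the explanation data."""
    return received_at + RESPONSE_WINDOW


def is_overdue(received_at: datetime, now: datetime) -> bool:
    """True once the window has fully elapsed without a response."""
    return now > response_due(received_at)


# Hypothetical request received on 2 March 2026 at 09:00 UTC.
received = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
print(response_due(received).isoformat())  # 2026-03-04T09:00:00+00:00
```

Using timezone-aware timestamps avoids disputes over when the clock started if the company and agency sit in different countries.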

E - Engagement & Disclosure

Ensure your careers page and candidate communications explicitly disclose the use of AI in the screening process to meet Article 50 transparency requirements. Silence from your suppliers is a red flag for your compliance team.

4. What to Do This Week

Take these steps immediately to map your exposure:

🚀 Immediate Action Plan

- The Disclosure Letter: Send a formal request to all recruitment partners asking for a list of AI tools used for screening, ranking, or evaluating candidates.

- The "Human-in-the-Loop" Check: Ask your partners, "Who is the named person responsible for reviewing AI-generated rejections before they are sent?"

- Update Candidate Privacy Notices: Ensure your careers page and candidate communications explicitly disclose the use of AI in the screening process to meet Article 50 transparency requirements.

- Get a Daiki AIMs Package: AI management and monitoring software to track, classify, and govern every AI system in your recruitment pipeline. See the Daiki platform demo.

Is Your Recruitment Pipeline Compliant?

In 163 days the August 2026 deadline hits. If your agencies use AI to screen candidates, you need to know - now.